Ask a large language model (LLM) like GPT-4 to smell a rain-soaked campsite, and it’ll politely decline. Ask the same system to describe that scent to you, and it’ll wax poetic about “an air thick with anticipation” and “a scent that is both fresh and earthy,” despite having neither prior experience with rain nor a nose to help it make such observations. One possible explanation for this phenomenon is that the LLM is simply mimicking the text present in its vast training data, rather than working with any real understanding of rain or smell.

But does the lack of eyes mean that language models can’t ever “understand” that a lion is “larger” than a house cat? Philosophers and scientists alike have long considered the ability to assign meaning to language a hallmark of human intelligence, and have pondered what essential ingredients enable us to do so.

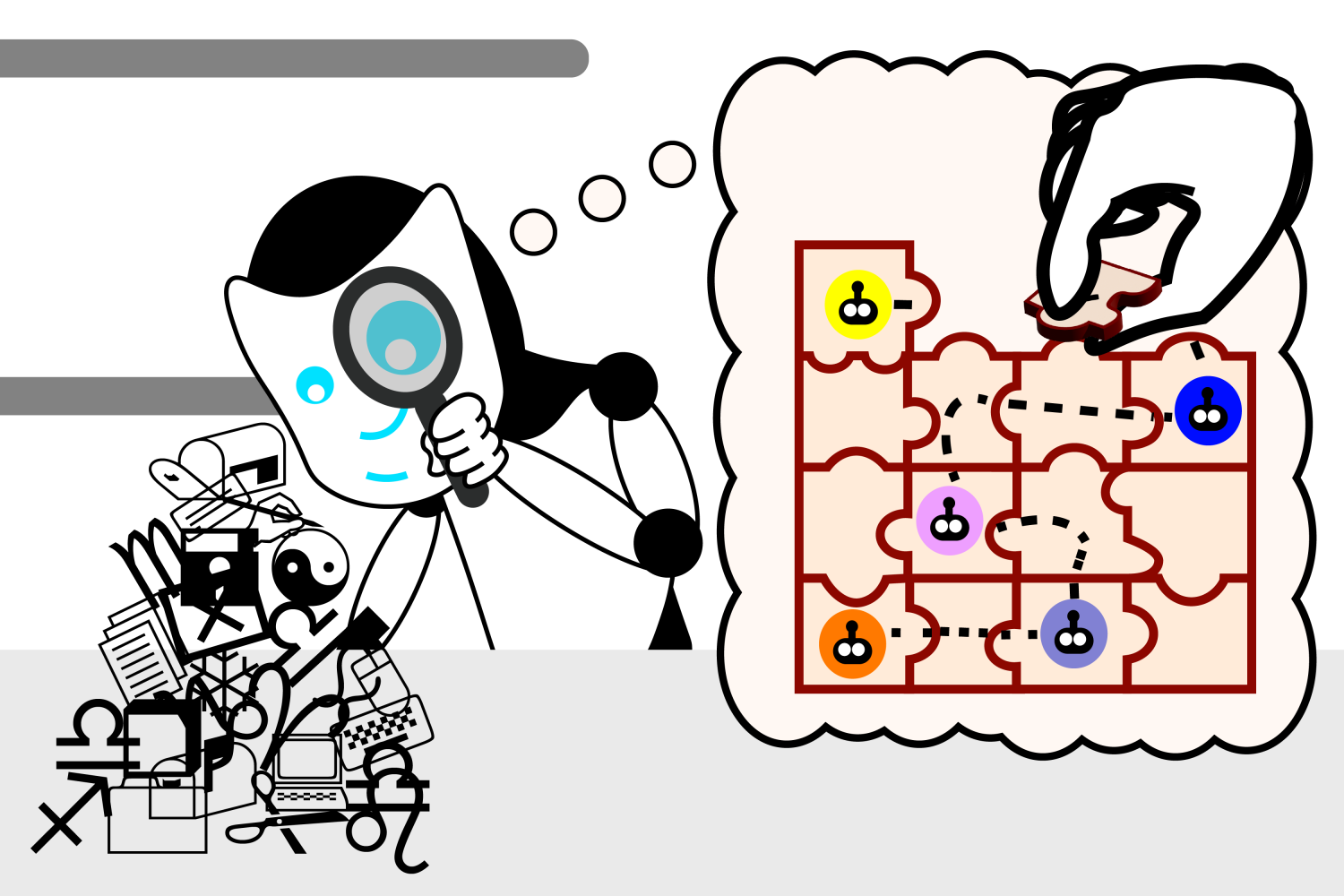

Peering into this enigma, researchers from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have uncovered intriguing results suggesting that language models may develop their own understanding of reality as a way to improve their generative abilities. The team first developed a set of small Karel puzzles, which consisted of coming up with instructions to control a robot in a simulated environment. They then trained an LLM on the solutions, but without demonstrating how the solutions actually worked. Finally, using a machine learning technique called “probing,” they looked inside the model’s “thought process” as it generated new solutions.

After training on over 1 million random puzzles, they found that the model spontaneously developed its own conception of the underlying simulation, despite never being exposed to this reality during training. Such findings call into question our intuitions about what types of information are necessary for learning linguistic meaning, and whether LLMs may someday understand language at a deeper level than they do today.

“At the start of these experiments, the language model generated random instructions that didn’t work. By the time we completed training, our language model generated correct instructions at a rate of 92.4 percent,” says MIT electrical engineering and computer science (EECS) PhD student and CSAIL affiliate Charles Jin, who is the lead author of a new paper on the work. “This was a very exciting moment for us because we thought that if your language model could complete a task with that level of accuracy, we might expect it to understand the meanings within the language as well. This gave us a starting point to explore whether LLMs do in fact understand text, and now we see that they’re capable of much more than just blindly stitching words together.”

Inside the mind of an LLM

The probe helped Jin witness this progress firsthand. Its role was to interpret what the LLM thought the instructions meant, unveiling that the LLM developed its own internal simulation of how the robot moves in response to each instruction. As the model’s ability to solve puzzles improved, these conceptions also became more accurate, indicating that the LLM was starting to understand the instructions. Before long, the model was consistently putting the pieces together correctly to form working instructions.
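To make the idea of a probe concrete, here is a minimal sketch of a probing classifier: a small model trained to read a label off another model's frozen internal states. Everything in it is an assumption for illustration. The two-dimensional "hidden states" are synthetic, the `north` and `east` labels are hypothetical robot-facing directions, and the nearest-centroid classifier is a simple stand-in rather than the probe used in the paper.

```python
import math


def fit_centroids(states, labels):
    """Average the hidden-state vectors that share a label (one centroid per label)."""
    sums, counts = {}, {}
    for vec, label in zip(states, labels):
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, value in enumerate(vec):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, vec):
    """Return the label whose centroid is closest to the given hidden state."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))


def accuracy(centroids, states, labels):
    """Fraction of hidden states for which the probe recovers the true label."""
    hits = sum(predict(centroids, s) == l for s, l in zip(states, labels))
    return hits / len(labels)


# Synthetic stand-ins for LLM hidden states (assumed, not real model activations).
train_states = [[0.9, 0.1], [1.1, -0.1], [0.1, 0.9], [-0.1, 1.1]]
train_labels = ["north", "north", "east", "east"]
test_states = [[0.8, 0.2], [0.2, 0.7]]
test_labels = ["north", "east"]

probe = fit_centroids(train_states, train_labels)
probe_accuracy = accuracy(probe, test_states, test_labels)
```

If the probe recovers the robot's state from held-out hidden states with high accuracy, that is evidence the information is encoded there. The language model itself is never updated during this process.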

Jin notes that the LLM’s understanding of language develops in phases, much like how a child learns speech in multiple steps. Starting off, it’s like a baby babbling: repetitive and mostly unintelligible. Then, the language model acquires syntax, or the rules of the language. This enables it to generate instructions that might look like genuine solutions, but they still don’t work.

The LLM’s instructions gradually improve, though. Once the model acquires meaning, it starts to churn out instructions that correctly implement the requested specifications, like a child forming coherent sentences.

Separating the method from the model: A “Bizarro World”

The probe was only intended to “go inside the brain of an LLM,” as Jin characterizes it, but there was a remote possibility that it also did some of the thinking for the model. The researchers wanted to ensure that their model understood the instructions independently of the probe, instead of the probe inferring the robot’s movements from the LLM’s grasp of syntax.

“Imagine you have a pile of data that encodes the LM’s thought process,” suggests Jin. “The probe is like a forensics analyst: You hand this pile of data to the analyst and say, ‘Here’s how the robot moves, now try and find the robot’s movements in the pile of data.’ The analyst later tells you that they know what’s going on with the robot in the pile of data. But what if the pile of data actually just encodes the raw instructions, and the analyst has figured out some clever way to extract the instructions and follow them accordingly? Then the language model hasn’t really learned what the instructions mean at all.”

To disentangle their roles, the researchers flipped the meanings of the instructions for a new probe. In this “Bizarro World,” as Jin calls it, directions like “up” now meant “down” within the instructions moving the robot across its grid.
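The flip can be pictured with a toy interpreter that runs the same instructions under two different meanings. This is only a sketch under stated assumptions: the four-word instruction set, the `ORIGINAL` and `BIZARRO` tables, and the `run` helper are hypothetical, and both the real Karel language and the exact remapping used by the researchers are richer than this.

```python
# Hypothetical toy semantics: each instruction word maps to a grid displacement.
ORIGINAL = {"up": (0, 1), "down": (0, -1), "left": (-1, 0), "right": (1, 0)}

# "Bizarro" semantics: same instruction words, but "up" and "down" swap meanings.
BIZARRO = dict(ORIGINAL, up=ORIGINAL["down"], down=ORIGINAL["up"])


def run(program, semantics, start=(0, 0)):
    """Return the robot's position after each instruction in the program."""
    x, y = start
    trace = []
    for instruction in program:
        dx, dy = semantics[instruction]
        x, y = x + dx, y + dy
        trace.append((x, y))
    return trace


program = ["up", "up", "right", "down"]
original_trace = run(program, ORIGINAL)  # positions under the original meanings
bizarro_trace = run(program, BIZARRO)    # positions under the flipped meanings
```

The program text is identical in both runs, so its syntax is unchanged. Only the robot positions that a probe would be asked to recover differ.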

“If the probe is translating instructions to robot positions, it should be able to translate the instructions according to the bizarro meanings equally well,” says Jin. “But if the probe is actually finding encodings of the original robot movements in the language model’s thought process, then it should struggle to extract the bizarro robot movements from the original thought process.”

As it turned out, the new probe experienced translation errors, unable to interpret a language model that had different meanings of the instructions. This meant the original semantics were embedded within the language model, indicating that the LLM understood what instructions were needed independently of the original probing classifier.

“This research directly targets a central question in modern artificial intelligence: are the surprising capabilities of large language models due simply to statistical correlations at scale, or do large language models develop a meaningful understanding of the reality that they are asked to work with? This research indicates that the LLM develops an internal model of the simulated reality, even though it was never trained to develop this model,” says Martin Rinard, an MIT professor in EECS, CSAIL member, and senior author on the paper.

This experiment further supported the team’s analysis that language models can develop a deeper understanding of language. Still, Jin acknowledges a few limitations to their paper: They used a very simple programming language and a relatively small model to glean their insights. In an upcoming work, they’ll look to use a more general setting. While Jin’s latest research doesn’t outline how to make the language model learn meaning faster, he believes future work can build on these insights to improve how language models are trained.

“An intriguing open question is whether the LLM is actually using its internal model of reality to reason about that reality as it solves the robot navigation problem,” says Rinard. “While our results are consistent with the LLM using the model in this way, our experiments are not designed to answer this next question.”

“There is a lot of debate these days about whether LLMs are actually ‘understanding’ language or rather if their success can be attributed to what is essentially tricks and heuristics that come from slurping up large volumes of text,” says Ellie Pavlick, assistant professor of computer science and linguistics at Brown University, who was not involved in the paper. “These questions lie at the heart of how we build AI and what we expect to be inherent possibilities or limitations of our technology. This is a nice paper that looks at this question in a controlled way: the authors exploit the fact that computer code, like natural language, has both syntax and semantics, but unlike natural language, the semantics can be directly observed and manipulated for experimental purposes. The experimental design is elegant, and their findings are optimistic, suggesting that maybe LLMs can learn something deeper about what language ‘means.’”

Jin and Rinard’s paper was supported, in part, by grants from the U.S. Defense Advanced Research Projects Agency (DARPA).